Beyond Prompt Engineering – What Do Students Really Need to Learn About AI? – Thomas Lancaster’s Blog

Is prompt engineering really the skill that we should be teaching students to prepare them for the future? I keep reading posts suggesting that prompt engineering is all students will need, and that students should be submitting copies of their interactions with AI (strictly, GenAI) systems like ChatGPT. But is prompt engineering really what we should be focusing on when we teach students for the future?

Of course, prompt engineering is a valuable skill. Right now, students who can’t write detailed prompts and give machines clear instructions are at a disadvantage. This may particularly impact students for whom English is not their first language. But how long will this remain such a challenge, with GenAI systems evolving so rapidly and more natural, intuitive ways of interfacing with AI emerging?

Imagine a world where, instead of typing detailed prompts, we interact with AI suggestions directly inside an editing window, make decisions by moving our bodies, control AI systems using wearable computing devices, or speak to these systems using everyday language. The day may not be far away when brain-computer interfaces are widely available and we directly control AI systems with just our thoughts. All of this comes before we consider all the AI agents busily interacting in the background.

It’s a world that’s both scary and exciting.

So, what do we need to teach students? I think students need to know how to value emerging AI systems, assess the utility and quality of those systems, and determine the best ways to interact with them. Knowing whether to use a free AI solution, or when to choose the $20 a month version, the $200 a month version, or the $2,000 a month version, will be an essential skill. We also need to ensure that students understand how AI models work, and the strengths and weaknesses of those models in their academic field and in their planned future careers.

Where prompt engineering remains, we will need to review how students demonstrate their proficiency with it in their fields. The expected standard of a prompt engineer will be much higher than it is today.

Proficiencies such as multi-modal prompting will need to be demonstrated during assessment, where a student controls a system not only through text, but also by providing visual and audio input, perhaps as prepared files, perhaps even with the system observing the student’s screen. Prompts may need to be chained together to create mega-prompts.
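To make the idea of chaining concrete, here is a minimal sketch in Python. It assumes a hypothetical `model` function that takes a prompt and returns text. The `echo_model` stand-in below is not a real GenAI API, just a placeholder so the example runs on its own. Each prompt template receives the previous response through a `{previous}` slot, which is one simple way to chain prompts, not the only one.

```python
from typing import Callable, List


def run_chain(steps: List[str], model: Callable[[str], str], initial_input: str) -> List[str]:
    """Run prompt templates in order, feeding each response into the next prompt."""
    outputs = []
    previous = initial_input
    for template in steps:
        # Fill the {previous} slot with the last response (or the initial input).
        prompt = template.format(previous=previous)
        previous = model(prompt)
        outputs.append(previous)
    return outputs


def echo_model(prompt: str) -> str:
    # Hypothetical stand-in for a real GenAI call; it only echoes the prompt back.
    return f"[response to: {prompt}]"


# Illustrative two-step chain: summarise first, then analyse the summary.
steps = [
    "Summarise these notes: {previous}",
    "List three risks in this summary: {previous}",
]
results = run_chain(steps, echo_model, "lecture notes")
```

Swapping `echo_model` for a call to an actual GenAI system would turn this into a working chain, with the second prompt building on whatever the first one returned.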

In fields like healthcare, GPs will need to be able to summarise and send vast quantities of information about patients as context to LLMs to receive the best recommendations, although accuracy will always need to be cross-checked.

Then, there are the prompts or techniques needed to control AI agents, which are more complex still.

Alongside all of this, we need students to understand the ethical implications of using and relying on AI systems. There are aspects of cognitive psychology to consider in order to get the best out of both humans and AI. Finally, we simply have to help users to avoid information overload.

As I hinted above, we’re part of one of the most exciting periods of change in the world’s history.

About This Post

In the interest of full disclosure, here are some details about how this post came about.

To create this post, I used Gemini 1.5 Pro with Deep Research to identify the future of interfacing with LLMs and GenAI beyond prompt engineering. This isn’t the latest or greatest Deep Research system, but it did produce a useful nine-page report when exported as a Google Doc, including 32 sources.

Now, I have ideas on this topic beyond the generated report, but this is a useful supplementary guide.

I then used the Google Doc as input for a Gemini 2.0 Flash chat session. Having the referenced information does help to ground future responses and increase their accuracy. I asked for blog post ideas, then the posts themselves, using this content to discuss what to teach instead of prompt engineering in a university setting.

I haven’t used the generated posts, but I have used some of the ideas, enhanced with my own thoughts. The posts were perfectly good, but never sounded like me. Working sensibly with GenAI, but with the aid of a knowledgeable human, is incredibly powerful.

The AI image was produced using DALL-E 3 inside ChatGPT.

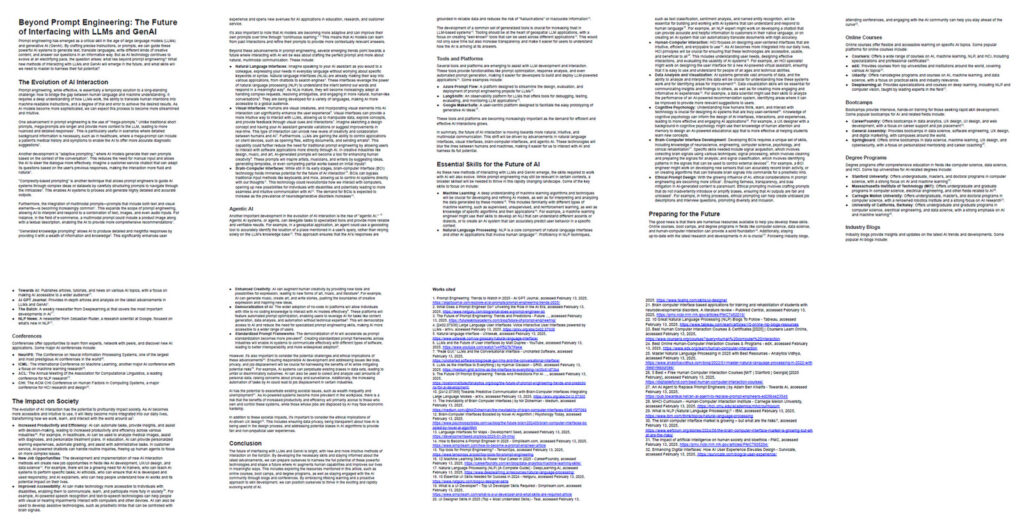

The diagram was produced using Napkin.AI. First I summarised the post down to five bullet points, then I chose from the available Napkin.AI diagrams. As this version of the diagram shows, there’s more to preparing students for an AI-driven future than just prompt engineering.